Introduction: LLM APIs have strict rate limits—requests per minute, tokens per minute, and concurrent request limits. Exceeding these limits results in 429 errors that can cascade through your application. Effective rate limiting on your side prevents hitting API limits, provides fair access across users, and enables graceful degradation under load. This guide covers practical rate […]

Category: Emerging Technologies

Emerging technologies span fields such as educational technology, information technology, nanotechnology, biotechnology, cognitive science, psychotechnology, robotics, and artificial intelligence.

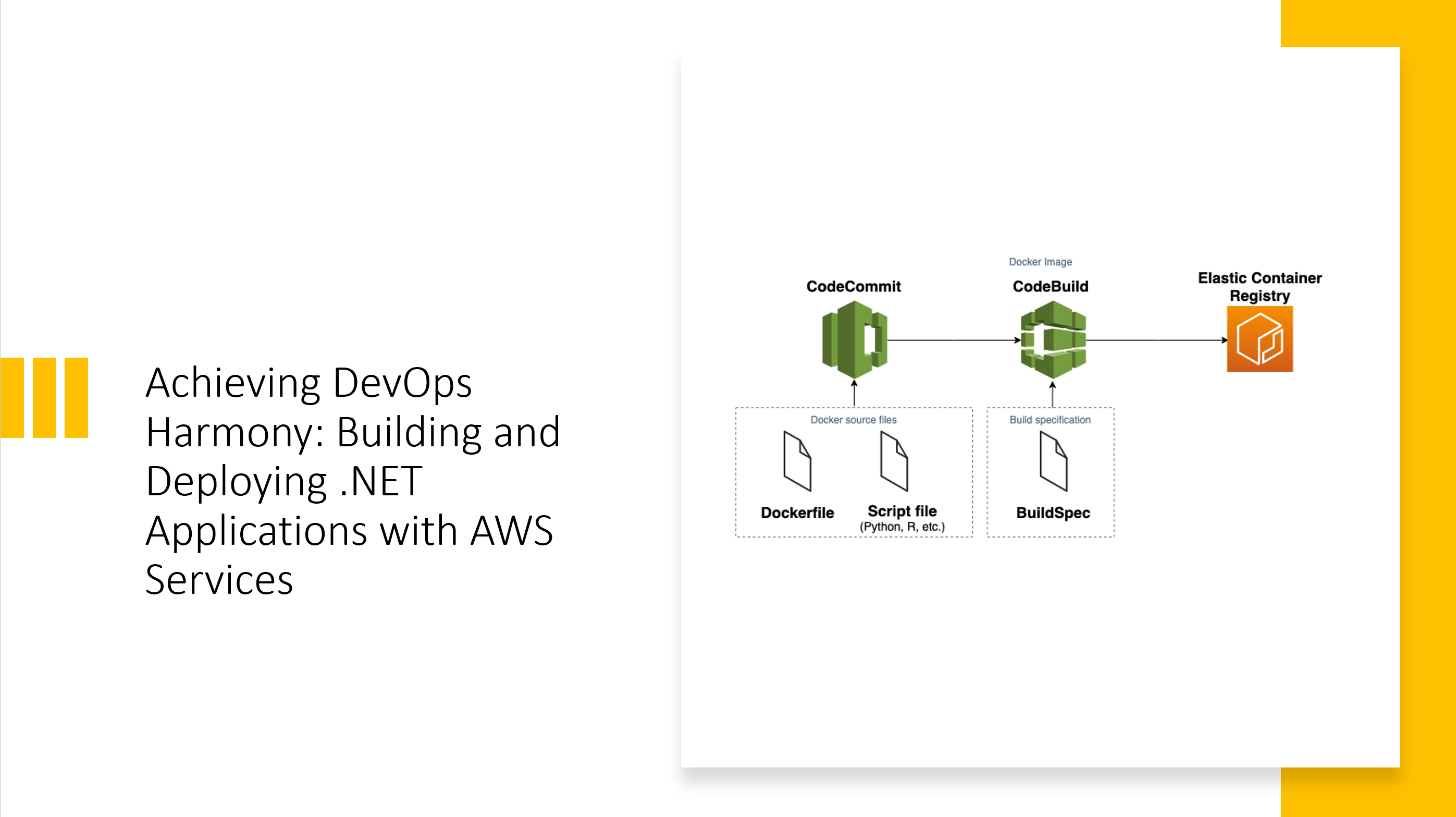

Achieving DevOps Harmony: Building and Deploying .NET Applications with AWS Services

The Evolution of .NET Deployment on AWS: After two decades of building enterprise applications, I’ve witnessed the transformation of deployment practices from manual FTP uploads to sophisticated CI/CD pipelines. When AWS introduced their native DevOps toolchain, it fundamentally changed how we approach .NET application delivery. The integration between CodeCommit, CodeBuild, CodePipeline, and ECR creates a […]

LLM Security: Understanding Prompt Injection, Jailbreaking, and Attack Vectors (Part 1 of 2)

A comprehensive guide to securing LLM applications against prompt injection, jailbreaking, and data exfiltration attacks. Includes production-ready defense implementations.

LLM Batch Processing: Scaling AI Workloads from Hundreds to Millions

Introduction: Processing thousands or millions of items through LLMs requires different patterns than single-request applications. Naive sequential processing is too slow, while uncontrolled parallelism hits rate limits and wastes money on retries. This guide covers production batch processing patterns: chunking strategies, parallel execution with rate limiting, progress tracking, checkpoint/resume for long jobs, cost estimation, and […]

The Future of Work: How AI and Automation Are Reshaping Careers

After two decades of architecting enterprise systems and leading digital transformation initiatives across financial services, healthcare, and technology sectors, I’ve witnessed firsthand how AI and automation are fundamentally reshaping the nature of work. This isn’t merely about replacing tasks—it’s about reimagining entire value chains, creating new categories of roles, and demanding a fundamental shift in […]

LLM Output Formatting: JSON Mode, Pydantic Parsing, and Template-Based Outputs

Introduction: LLM outputs are inherently unstructured text, but applications need structured data—JSON objects, typed responses, specific formats. Getting reliable structured output requires careful prompt engineering, output parsing, validation, and error recovery. This guide covers practical output formatting techniques: JSON mode and structured outputs, Pydantic-based parsing, format enforcement with retries, template-based formatting, and strategies for handling […]
