In late 2024, when AWS announced Amazon Bedrock Flows, my immediate thought was shared by almost every enterprise architect I know: “Did AWS just rebuild Step Functions for GenAI?” The visual node builder, the conditional routing, the variable passing: it looked suspiciously like a lightweight, AI-specific clone of AWS’s most established orchestration service.

Now, 15 months into general availability, and after migrating dozens of complex LLM pipelines for clients, I find the distinction painfully clear. Bedrock Flows is incredibly powerful, but if you treat it as a direct replacement for AWS Step Functions, your production workloads will fail under operational load. This article distills the hard-earned lessons of orchestrating generative AI architectures on AWS. It defines exactly what Bedrock Flows is and where it clearly beats hand-written orchestration code for rapidly deploying RAG pipelines and prompt chains. It also sets out the architectural boundaries that dictate when you must integrate with, or fall back to, the heavyweight durability of AWS Step Functions.

What Amazon Bedrock Flows Actually Is

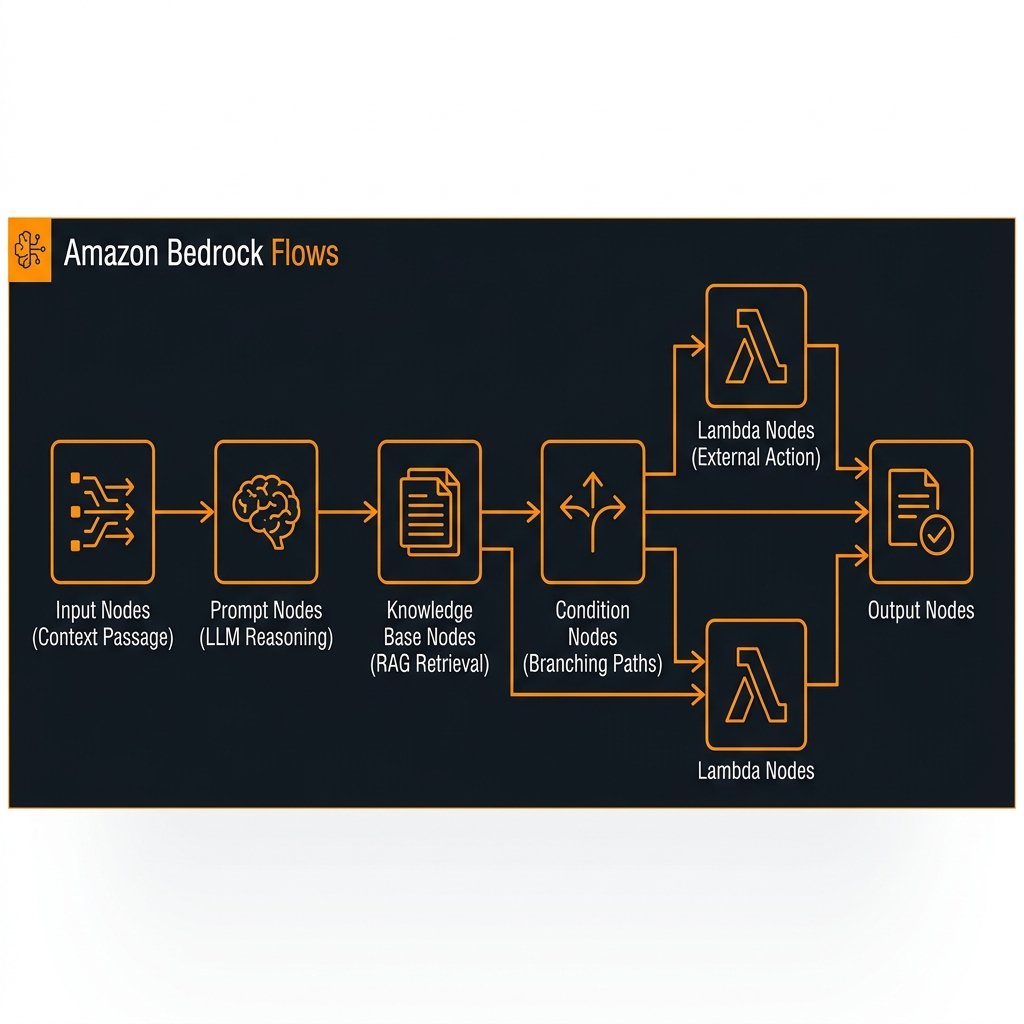

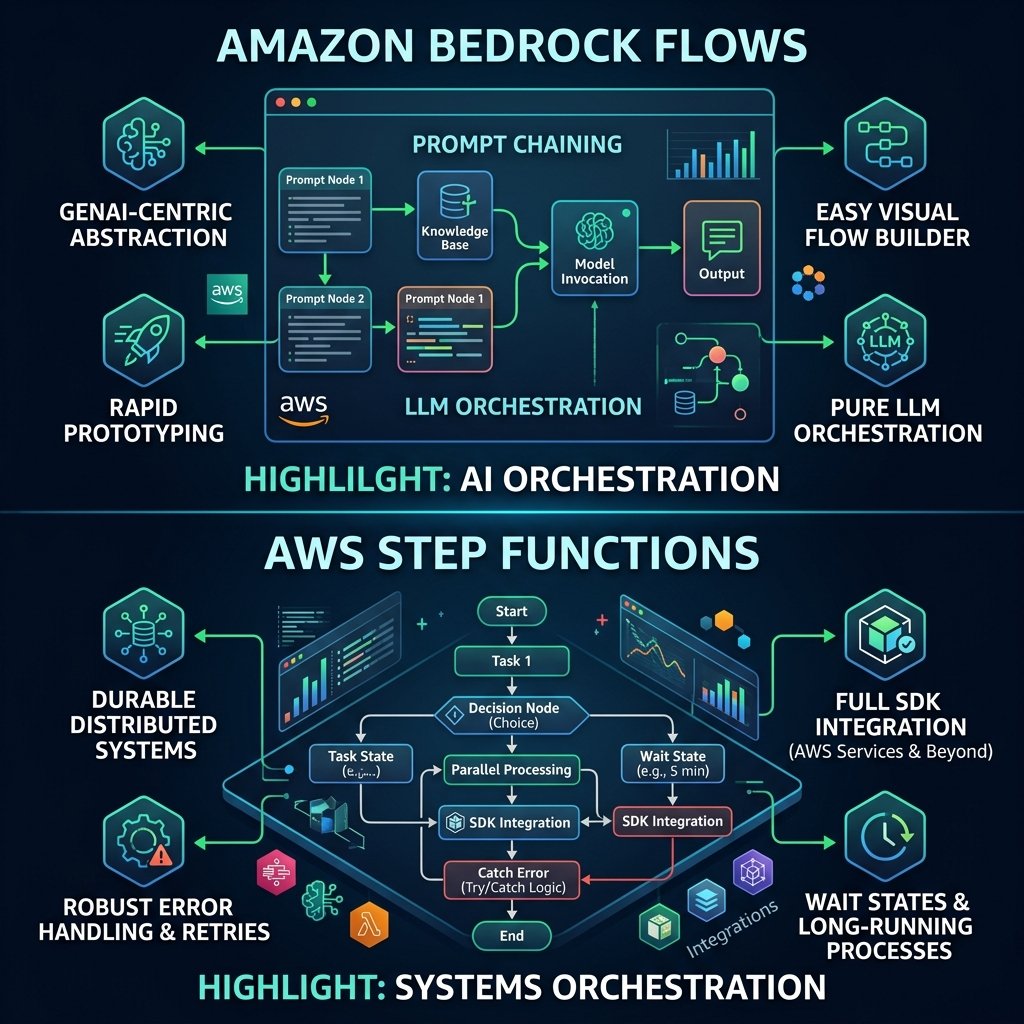

Bedrock Flows is a visually driven, node-based orchestration layer tightly coupled to the Amazon Bedrock ecosystem. It is designed for rapid prototyping and deployment of GenAI workflows. It abstracts away the connective tissue needed to chain together Foundation Models, Knowledge Bases for Retrieval-Augmented Generation (RAG), external APIs, and AWS Lambda functions.

When you write an AI agent in Python, even with a framework like LangChain, you still handle the plumbing yourself:

- passing intermediate LLM generations into the next LLM call
- filling in prompt templates
- parsing the JSON strings the model sends back
- configuring memory

Bedrock Flows turns this code-heavy setup into a drag-and-drop graph interface within the AWS Console.
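For contrast, here is a minimal sketch of the glue code that a hand-written two-step chain tends to accumulate. It renders templates, threads one generation into the next prompt, and defensively parses JSON that the model was asked to return. The `llm` callable is a hypothetical stand-in for whatever client you actually use, and the template wording is illustrative. Together these let the sketch run without AWS credentials.

```python
import json
import re
from typing import Callable

# Hypothetical prompt templates; in Bedrock Flows these would live in Prompt nodes.
CLASSIFY_TEMPLATE = (
    'Classify the tone of this message and reply with JSON like {{"tone": "angry"}} '
    'or {{"tone": "neutral"}}.\nMessage: {message}'
)
ANSWER_TEMPLATE = "The user sounds {tone}. Answer their question.\nQuestion: {message}"


def parse_model_json(raw: str) -> dict:
    """Extract the first JSON object from a reply that may be wrapped in prose or code fences."""
    match = re.search(r"\{.*\}", raw, re.DOTALL)
    if match is None:
        raise ValueError(f"No JSON object found in model output: {raw!r}")
    return json.loads(match.group(0))


def run_chain(message: str, llm: Callable[[str], str]) -> str:
    """Two-step chain: classify tone, then feed the parsed result into the next prompt."""
    # Step 1: render the classifier prompt and call the model.
    classification_raw = llm(CLASSIFY_TEMPLATE.format(message=message))
    # Step 2: turn the free-text generation into structured data.
    tone = parse_model_json(classification_raw).get("tone", "neutral")
    # Step 3: thread the intermediate result into the next prompt template.
    return llm(ANSWER_TEMPLATE.format(tone=tone, message=message))
```

Every one of these steps is a place where a format change in the model's reply can break the pipeline. This is the category of code that a visual Prompt-node graph takes off your hands.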

The Primary Capabilities: Prompt Chaining and RAG

Flows excels at Prompt Chaining. A typical pipeline might look like this:

1. A Flow Input Node connects to an LLM Prompt Node instructed to classify the user’s tone.
2. That output feeds into a Condition Node.
3. If the tone is “angry”, the flow routes to a Prompt Node designed for de-escalation.
4. Otherwise, it routes to a Knowledge Base Node. That node looks up technical documentation and passes the retrieved facts to a final generation Prompt Node.

```mermaid
flowchart TD
    IN[Flow Input] --> PROMPT1(Classifier LLM Node)
    PROMPT1 --> COND{Condition Node}
    COND -->|Angry| PROMPT2(De-escalation LLM)
    COND -->|Neutral| KB[Bedrock Knowledge Base Node]
    KB --> PROMPT3(RAG Gen LLM)
    PROMPT2 --> OUT[Flow Output]
    PROMPT3 --> OUT
```

Building the above architecture programmatically would require substantial Python scaffolding. In Bedrock Flows, it takes less than 15 minutes to wire together, test directly in the console editor, and publish as a numbered version behind an alias via the API.
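To make the routing concrete, the sketch below models the same graph as plain Python, with one function per node. A dictionary of branches plays the role of the Condition node. This is only a local mental model built on assumptions. The classifier is a keyword check rather than an LLM, the retriever returns a canned document, and all function names are hypothetical. It does not show how the Bedrock Flows runtime is implemented or configured.

```python
from typing import Callable, Dict, List


def classify_tone(text: str) -> str:
    """Stand-in for the Classifier Prompt node (a keyword check instead of an LLM)."""
    angry_markers = ("!!", "furious", "unacceptable")
    lowered = text.lower()
    return "angry" if any(marker in lowered for marker in angry_markers) else "neutral"


def deescalate(text: str) -> str:
    """Stand-in for the De-escalation Prompt node."""
    return "I'm sorry about the trouble. Let me help right away."


def retrieve_docs(text: str) -> List[str]:
    """Stand-in for the Knowledge Base node."""
    return ["Reset the device by holding the power button for 10 seconds."]


def rag_generate(text: str, docs: List[str]) -> str:
    """Stand-in for the RAG generation Prompt node."""
    return f"Based on {len(docs)} document(s): {docs[0]}"


def run_flow(user_input: str) -> str:
    """Mirror of the diagram: classifier -> condition -> one branch -> output."""
    tone = classify_tone(user_input)
    branches: Dict[str, Callable[[str], str]] = {
        "angry": deescalate,
        "neutral": lambda text: rag_generate(text, retrieve_docs(text)),
    }
    # Condition node: unrecognized labels fall through to the neutral branch,
    # similar in spirit to a default condition.
    return branches.get(tone, branches["neutral"])(user_input)
```

Each edge in the visual editor corresponds to one of these hand-offs. The value of Flows is that the wiring, tracing, and versioning of those hand-offs are managed for you.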

```python
import boto3

bedrock_agent_runtime = boto3.client("bedrock-agent-runtime", region_name="us-east-1")


def invoke_bedrock_flow(flow_id: str, flow_alias_id: str, prompt: str):
    """
    Invokes an active Bedrock Flow programmatically.

    Unlike a Standard Step Functions execution, the call blocks until the flow
    finishes; node outputs are streamed back over an event stream.
    """
    response = bedrock_agent_runtime.invoke_flow(
        flowIdentifier=flow_id,
        flowAliasIdentifier=flow_alias_id,
        inputs=[
            {
                # Name and output of the flow's input node ("FlowInputNode" / "document").
                "content": {"document": prompt},
                "nodeName": "FlowInputNode",
                "nodeOutputName": "document",
            }
        ],
    )

    # The output is returned via an event stream. Unpack it and return the
    # document emitted by the first Flow Output node.
    for event in response["responseStream"]:
        if "flowOutputEvent" in event:
            return event["flowOutputEvent"]["content"]["document"]

    return None
```

The Crucial Limit: Where Flows Stops and Step Functions Begins

Because Bedrock Flows presents a graph-based workflow layout, developers immediately try to use it as a business process orchestration tool. They insert Lambda nodes that write to DynamoDB, send email through Amazon SES, wait for user approvals, and update customer balances.

This is an architectural anti-pattern that leads to disastrous systemic fragility.

Flows is an AI Orchestrator. Step Functions is a Systems Orchestrator.

Bedrock Flows is fundamentally a synchronous, short-lived compute wrapper. When you call `invoke_flow()`, the request stays open while execution moves through the nodes. The execution dies if any node times out, or if the flow sits behind API Gateway and runs past its integration timeout (29 seconds by default for REST APIs). Several safety nets are missing:

- There are no wait states. You cannot pause a Bedrock Flow for 24 hours waiting for a human to click an email link.
- There is no “Retry on 503 with exponential backoff” configuration for a Lambda node inside a flow.
- There is no dead-letter queue (DLQ) to capture a run whose prompt generation comes back malformed.
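Because there is no built-in retry policy to lean on, code that calls a flow directly ends up hand-rolling one. The sketch below is minimal and assumes the wrapped call raises on transient failures. The helper name and defaults are hypothetical. The jitter strategy (“full jitter”) is one common choice, not something Bedrock prescribes.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_backoff(
    fn: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on the given exception types with capped exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the last error to the caller.
            # Exponential ceiling: base, 2*base, 4*base, ... capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))  # Full jitter spreads out synchronized retries.
    raise AssertionError("unreachable when max_attempts >= 1")
```

A call might look like `call_with_backoff(lambda: invoke_bedrock_flow(flow_id, alias_id, prompt), retry_on=(...))`. boto3 surfaces service errors as `botocore.exceptions.ClientError`, so a real version would also inspect the error code and retry only throttling-style failures. Note the catch: a retry re-runs the entire flow from its first node. Any Lambda node with side effects therefore runs again, which is exactly why transactional work does not belong inside a flow.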

Conversely, AWS Step Functions handles durable, distributed state. It offers two workflow types:

- A Standard workflow can run for up to one year with exactly-once execution semantics. It supports Task Tokens for callback-style pauses such as human approvals.
- An Express workflow runs for at most five minutes but can process tens of thousands of requests per second. Its execution semantics are at-least-once (asynchronous) or at-most-once (synchronous).

Both types offer native SDK integrations with virtually every AWS service. Both also provide granular, declarative error handling that matches specific error names (`States.Timeout`, `States.TaskFailed`).
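In Step Functions, that error handling is declared rather than coded. The sketch below builds one Amazon States Language (ASL) Task state as a Python dict. The state invokes a Lambda function through the `arn:aws:states:::lambda:invoke` integration and retries transient Lambda service errors with exponential backoff. Anything else is routed to a failure-handling state instead of being lost. This is a fragment: the function name and the `RecordFailure` and `EvaluateRisk` state names are hypothetical placeholders for other states in the same machine.

```python
import json

INVOKE_TASK = {
    "Type": "Task",
    "Resource": "arn:aws:states:::lambda:invoke",
    "Parameters": {
        "FunctionName": "risk-assessment-flow-invoker",  # Hypothetical function name.
        "Payload.$": "$",
    },
    "TimeoutSeconds": 120,  # Exceeding this fails the state with States.Timeout.
    "Retry": [
        {
            # Transient Lambda service errors: retry after 2s, 4s, then 8s.
            "ErrorEquals": [
                "Lambda.ServiceException",
                "Lambda.AWSLambdaException",
                "Lambda.SdkClientException",
                "Lambda.TooManyRequestsException",
            ],
            "IntervalSeconds": 2,
            "BackoffRate": 2.0,
            "MaxAttempts": 3,
        }
    ],
    "Catch": [
        {
            # States.TaskFailed matches any known error except States.Timeout, so list both.
            "ErrorEquals": ["States.TaskFailed", "States.Timeout"],
            "ResultPath": "$.error",  # Keep the original input and attach the error details.
            "Next": "RecordFailure",
        }
    ],
    "Next": "EvaluateRisk",
}

print(json.dumps(INVOKE_TASK, indent=2))
```

The point is not the specific numbers but where they live. The retry and catch policy sits in the workflow definition, is visible in the console, and is recorded in the execution history.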

The Enterprise Solution: The Hybrid Orchestration Pattern

For true enterprise-grade GenAI applications, you do not choose between Bedrock Flows and Step Functions; you compose them. Step Functions controls the durable business logic, and Bedrock Flows encapsulates the volatile prompt-chaining and AI reasoning logic.

Consider a highly regulated enterprise loan-approval AI system:

1. Step Functions starts the execution, pulls the original user ID, logs the transaction start, and fetches the raw documents from S3.
2. Step Functions then triggers a Lambda function that calls a specific Bedrock Flow.
3. The Bedrock Flow extracts the data, queries the policy Knowledge Base, and chains prompts together to evaluate the risk. It returns a strict JSON payload.
4. Step Functions receives this JSON, natively parses it, and evaluates the risk threshold.
5. Finally, Step Functions either pauses on a durable human-approval step using a Task Token or securely commits the final result to RDS.
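The Lambda function that calls the Bedrock Flow is the seam between the two orchestrators, so it is worth keeping strict. The sketch below is minimal and rests on several assumptions:

- The flow’s output node emits JSON with hypothetical `risk_score` and `decision` fields.
- The flow and alias IDs arrive in hypothetical `FLOW_ID` and `FLOW_ALIAS_ID` environment variables.
- The Step Functions input carries hypothetical `applicationId` and `documentContext` fields.

The key design choice is to raise on malformed output instead of passing it along. That way the Task state’s Retry and Catch rules, not downstream code, decide what happens next.

```python
import json
import os
from typing import Any, Callable, Dict, Optional

# Hypothetical output contract agreed between the flow owners and the workflow owners.
REQUIRED_FIELDS = {"risk_score", "decision"}


class FlowContractError(Exception):
    """Raised when the flow's output does not match the agreed JSON contract."""


def validate_flow_output(raw: Any) -> Dict[str, Any]:
    """Accept a JSON string or an already-decoded object and enforce the contract."""
    if isinstance(raw, str):
        try:
            raw = json.loads(raw)
        except json.JSONDecodeError as exc:
            raise FlowContractError(f"Flow returned non-JSON output: {raw[:200]!r}") from exc
    if not isinstance(raw, dict):
        raise FlowContractError("Flow output must be a JSON object")
    missing = REQUIRED_FIELDS - set(raw)
    if missing:
        raise FlowContractError(f"Flow output missing fields: {sorted(missing)}")
    return raw


def make_handler(invoke: Callable[[str, str, str], Optional[Any]]) -> Callable[[dict, Any], dict]:
    """Build a Lambda handler around an injected invoke function such as invoke_bedrock_flow."""

    def handler(event: dict, context: Any) -> dict:
        raw = invoke(
            os.environ["FLOW_ID"],  # Hypothetical environment variable names.
            os.environ["FLOW_ALIAS_ID"],
            json.dumps(event["documentContext"]),  # Hypothetical input field.
        )
        if raw is None:
            raise FlowContractError("Flow produced no output event")
        result = validate_flow_output(raw)
        # Carry the business key through so later states never depend on the model for it.
        return {"applicationId": event["applicationId"], **result}

    return handler
```

In a deployment you would export `handler = make_handler(invoke_bedrock_flow)`. When a Python Lambda function raises, Step Functions reports the exception class name as the error name. A Retry or Catch rule can therefore match `FlowContractError` specifically.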

```mermaid
sequenceDiagram
    participant SF as AWS Step Functions
    participant L as Execution Lambda
    participant BF as Bedrock Flows
    participant KB as Bedrock Knowledge Base (RAG)
    participant FM as Foundation Models (Claude 3.5)
    SF->>SF: Initialize Transaction (Audit Log to DDB)
    SF->>L: Invoke Lambda with Document Context
    L->>BF: invoke_flow(Context)
    rect rgb(20, 30, 40)
        Note over BF, FM: Embedded AI Prompt Chaining
        BF->>FM: Extract PDF Text
        FM-->>BF: Text
        BF->>KB: Retrieve Loan Policies
        KB-->>BF: Policy Snippets
        BF->>FM: Evaluate Text vs Policies
        FM-->>BF: Risk Assessment (JSON)
    end
    BF-->>L: Return Clean JSON Output
    L-->>SF: Task Success (Payload)
    SF->>SF: Conditional Branch (High Risk?)
    SF->>SF: Write Final Result to RDS
```

This hybrid pattern frees your data scientists and AI engineers to keep tweaking, testing, and versioning the Bedrock Flow in the visual console. That work stays fully decoupled from the core transactional backend. The software engineers who own the Step Functions state machine don’t need to care about temperature settings or RAG reranking logic. They treat the Bedrock Flow as an opaque Lambda task with a fixed JSON output contract: the model’s wording may vary between runs, but the shape of the payload must not.
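On the Step Functions side, the “Conditional Branch (High Risk?)” step and the human-approval path map onto a Choice state and a `.waitForTaskToken` task. The sketch below expresses those states as a Python dict and rests on several assumptions:

- The assessment from the previous task has already been placed at `$.assessment`, for example by combining `ResultSelector` and `ResultPath` on the invoke task.
- `notify-underwriter` and `write-loan-decision` are hypothetical Lambda functions.
- The 0.7 threshold and all state names are illustrative.

Task tokens require a Standard workflow.

```python
import json

APPROVAL_STATES = {
    "EvaluateRisk": {
        "Type": "Choice",
        "Choices": [
            {
                "Variable": "$.assessment.risk_score",
                "NumericGreaterThanEquals": 0.7,  # Illustrative threshold.
                "Next": "WaitForHumanApproval",
            }
        ],
        "Default": "WriteFinalResult",
    },
    "WaitForHumanApproval": {
        "Type": "Task",
        # The execution pauses here until SendTaskSuccess/SendTaskFailure is called with the token.
        "Resource": "arn:aws:states:::lambda:invoke.waitForTaskToken",
        "Parameters": {
            "FunctionName": "notify-underwriter",  # Hypothetical: emails the token to a reviewer.
            "Payload": {
                "taskToken.$": "$$.Task.Token",
                "assessment.$": "$.assessment",
            },
        },
        "TimeoutSeconds": 259200,  # Reviewers get up to 3 days before States.Timeout.
        "ResultPath": "$.approval",
        "Next": "WriteFinalResult",
    },
    "WriteFinalResult": {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": "write-loan-decision", "Payload.$": "$"},
        "End": True,
    },
}

print(json.dumps(APPROVAL_STATES, indent=2))
```

The reviewer-facing endpoint would resume the paused execution with `boto3.client("stepfunctions").send_task_success(taskToken=token, output=json.dumps({"approved": True}))`, or call `send_task_failure` to reject it. Nothing in the Bedrock Flow needs to know that a human was involved.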

Decision Matrix Framework

To quickly decide when to deploy Bedrock Flows versus Step Functions (or how to compose them), use this evaluation rubric:

- Use Bedrock Flows exclusively if: The task is purely text generation, summarization, prompt chaining, or basic RAG document retrieval. The payload response must be returned synchronously to a waiting client (like a Chat UI). No mutation or transactional data creation takes place.

- Use Step Functions exclusively if: The workflow involves multiple wait states (hours or days), relies on integrations across AWS services (SQS, SNS, Glue, Batch) rather than just language models, requires retry/catch logic on failure, or executes financial/database transactions requiring strict idempotency.

- Use the Hybrid Pattern if: You need complex generative AI reasoning (prompt chaining, RAG) to drive a robust business process. That process writes transactionally to databases, involves humans in the loop, or has multi-step, durable processing requirements that exceed 5 minutes.
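The rubric is mechanical enough to write down as code, which can be handy in design reviews. This sketch uses hypothetical field names. The 300-second cutoff mirrors the five-minute Express workflow limit. The function encodes this article’s guidance, not an AWS rule.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    uses_generative_ai: bool           # Prompt chaining, summarization, RAG
    mutates_state: bool                # Database writes, payments, emails
    needs_human_wait: bool             # Approvals or callbacks that can take hours or days
    needs_retry_or_catch: bool         # Failure handling must be explicit and auditable
    max_duration_seconds: float        # Worst-case end-to-end runtime
    non_ai_integrations: bool = False  # SQS, SNS, Glue, Batch, ...


def choose_orchestrator(w: WorkloadProfile) -> str:
    """Return "bedrock_flows", "step_functions", or "hybrid" following the rubric above."""
    durable_needs = (
        w.mutates_state
        or w.needs_human_wait
        or w.needs_retry_or_catch
        or w.non_ai_integrations
        or w.max_duration_seconds > 300
    )
    if w.uses_generative_ai and durable_needs:
        return "hybrid"
    if w.uses_generative_ai:
        return "bedrock_flows"
    return "step_functions"
```

A chat UI answering from a Knowledge Base lands on `bedrock_flows`. The loan-approval system lands on `hybrid`.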

Key Takeaways

- Bedrock Flows abstracts the GenAI “glue” code. It replaces repetitive, fragile Python scripting for prompt chaining, string parsing, and sequential LLM calls with a graphical interface and a managed execution graph.

- Flows are synchronous orchestrators. They are not designed to endure long waits, absorb long-running timeouts, or manage durable application state.

- Do not write transactions inside a Bedrock Flow. Using a Lambda node to execute critical database writes is structurally dangerous, because Flows lack the declarative Retry/Catch and rollback handling that Step Functions provides.

- Adopt the Hybrid Pattern for enterprise workloads. Let Bedrock Flows manage cognitive prompt chaining, and let AWS Step Functions durably manage the workflow pipeline, human reviews, and database writes.

- Use aliases to isolate deployment risk. Published Bedrock Flow versions are immutable snapshots. AI engineers can therefore experiment aggressively behind dev aliases while production Step Functions workflows reference a stable alias that points at a tested version.
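The alias workflow can be scripted with the `bedrock-agent` control-plane client. The sketch below is minimal and assumes the flow’s working draft has already been prepared (`prepare_flow`) and that production callers reference an existing alias. `promote_draft` is a hypothetical helper, and the client is passed in so the logic can be exercised with a stub. Check the `CreateFlowVersion` and `UpdateFlowAlias` API references before relying on the exact request shapes.

```python
from typing import Any


def promote_draft(client: Any, flow_id: str, alias_id: str, alias_name: str) -> str:
    """Snapshot the prepared draft as an immutable version, then repoint an alias at it.

    `client` is expected to behave like boto3.client("bedrock-agent").
    """
    # 1. Freeze the current (prepared) draft into a numbered, immutable version.
    version = client.create_flow_version(flowIdentifier=flow_id)["version"]
    # 2. Move the alias; callers that invoke through this alias pick up the new
    #    version without any change to the Step Functions definition.
    client.update_flow_alias(
        flowIdentifier=flow_id,
        aliasIdentifier=alias_id,
        name=alias_name,
        routingConfiguration=[{"flowVersion": version}],
    )
    return version
```

Rolling back is the same `update_flow_alias` call pointed at the previous version number. That is what makes aliases a cheap safety valve.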

Glossary

- Amazon Bedrock Flows: A managed capability in Amazon Bedrock providing a visual, node-based workspace to construct, test, and deploy generative AI workflows combining Foundation Models, prompts, and APIs.

- Prompt Chaining: The practice of feeding the output of one Large Language Model (LLM) interaction directly into the prompt of a subsequent LLM interaction, breaking a complex problem down into sequential logical steps.

- AWS Step Functions: A serverless, durable orchestration service in AWS used to design and execute complex workflows that integrate with nearly every AWS service, using declarative Retry/Catch error handling, backoff, and wait states.

- Node-Based Architecture: A visual programming interface where functional units (nodes), such as a Prompt Node, Condition Node, or Knowledge Base Node, are connected by edges that pass output keys directly as input variables to downstream logic.

- Hybrid Pattern: An enterprise architecture design where lightweight cognitive logic (like RAG extraction chains) runs in Bedrock Flows. That logic is invoked by an overarching, highly durable AWS Step Functions workflow that owns the business context.

- Idempotency: The property of an operation ensuring that making multiple identical requests has the same systemic effect as making a single request. It is crucial when designing resilient state machines outside of LLM generations.
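The idempotency definition is easiest to see with an idempotency key. This is a minimal in-memory sketch, and `IdempotentWriter` is a hypothetical name. In a real deployment the dictionary would be a durable store, such as a DynamoDB table written with a conditional expression. The key would come from something stable across retries, for example the Step Functions execution name.

```python
from typing import Callable, Dict


class IdempotentWriter:
    """Apply each logical write at most once, keyed by a caller-supplied idempotency key."""

    def __init__(self) -> None:
        # Stand-in for a durable table. Not safe under concurrent callers; a conditional
        # write (e.g. DynamoDB attribute_not_exists) would close that gap.
        self._results: Dict[str, str] = {}

    def write(self, idempotency_key: str, apply: Callable[[], str]) -> str:
        # A repeated request (for example, a retried task) gets the stored result back
        # instead of re-applying the side effect.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        result = apply()
        self._results[idempotency_key] = result
        return result
```

With this in place, a Retry rule that re-runs the write step is harmless: the second attempt returns the first attempt’s result.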


Discover more from C4: Container, Code, Cloud & Context
