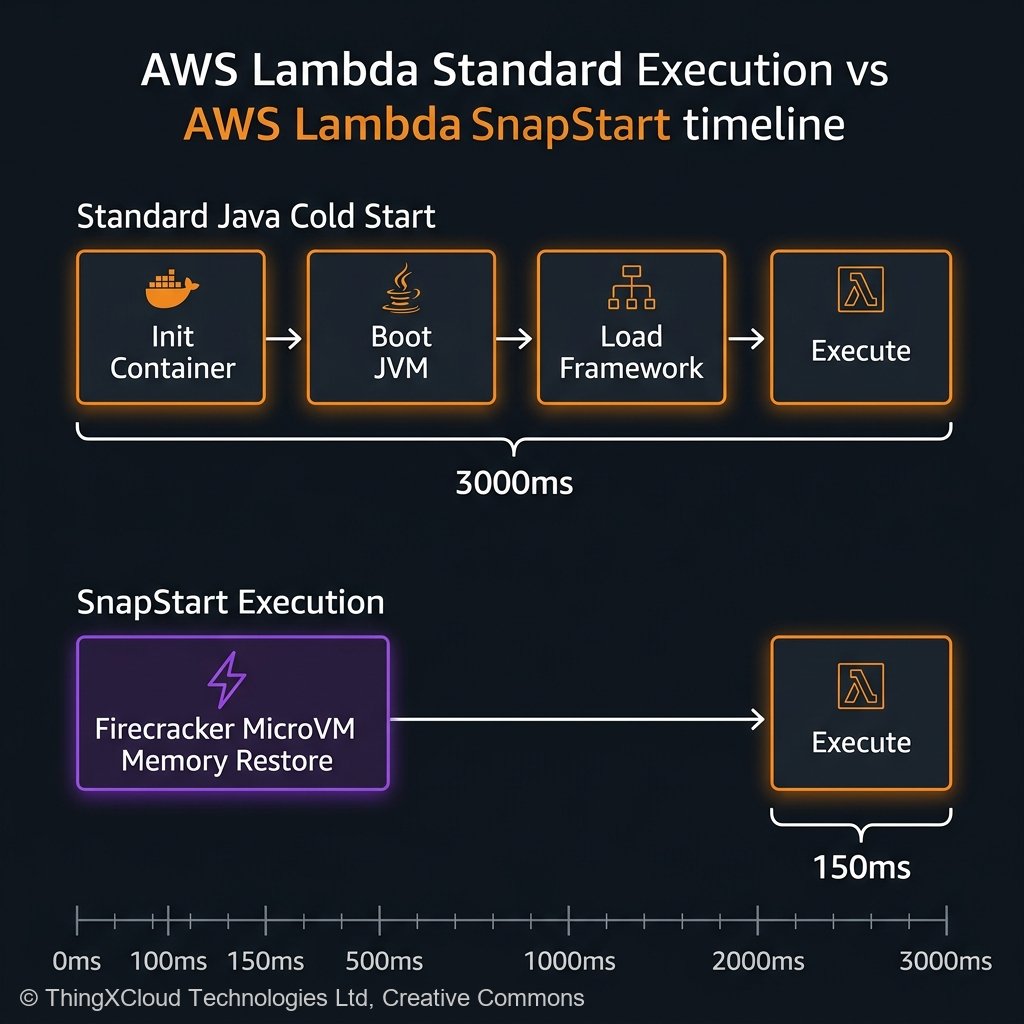

For nearly a decade, enterprise architects avoided migrating heavyweight Java Virtual Machine (JVM) or .NET CLR workloads to AWS Lambda. The arithmetic was punishing: when a request hit an idle Lambda endpoint, AWS had to provision the Firecracker microVM, download the deployment package or container image, load the JVM into RAM, and run the framework startup work, such as Spring Boot class loading or Entity Framework model building. This initialization sequence, known as the “cold start,” frequently took between 3 and 7 seconds.

In a real-time web application or synchronous API, a 7-second pause before a single byte of business logic runs is a failure condition. Historically, engineering teams papered over the problem by running scheduled “ping” scripts to keep functions artificially warm, or by paying a steep monthly baseline for Provisioned Concurrency across thousands of serverless endpoints.

By mid-2026, those workarounds are largely unnecessary for Java workloads. The enterprise baseline for running heavy, statically typed ecosystems on AWS is **AWS Lambda SnapStart**. By restoring a memory snapshot of an already initialized Firecracker microVM, SnapStart can cut initialization delays by roughly 90%, letting large 2 GB Java functions begin serving a request in under 150 milliseconds in favorable cases. This deep dive explores the execution mechanics and the UUID security pitfalls of adopting state restoration at scale.

The Firecracker MicroVM Execution Theory

To understand how SnapStart achieves this performance, one must distinguish between deploying code and deploying state.

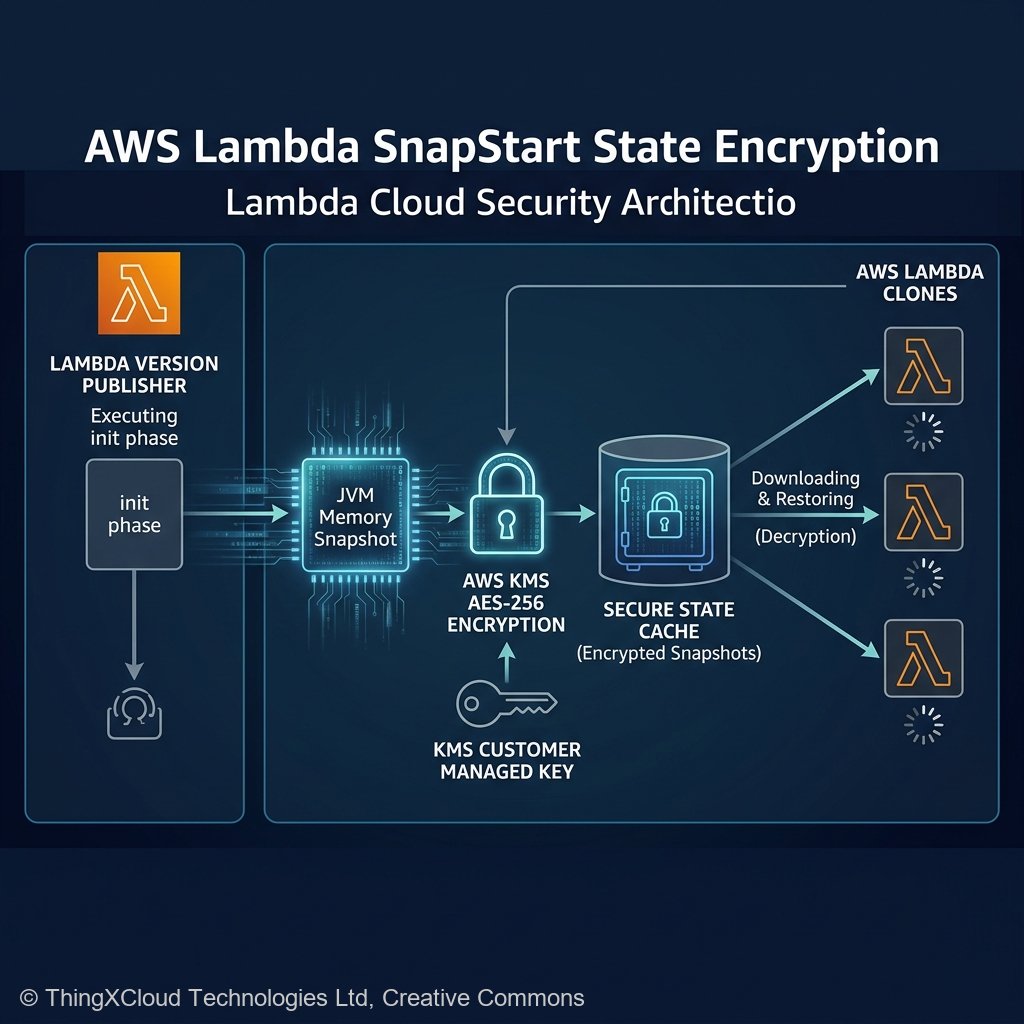

When a developer publishes a new *Version* of a Lambda function with SnapStart enabled, the AWS control plane does more than store the artifact. Instead of merely saving the `.zip` deployment package, AWS boots the function ahead of time inside a secure, isolated sandbox.

In this environment, AWS runs the entire initialization sequence: it starts the JVM, loads the dependency graph, populates static variables, and leaves the heap exactly as it would appear just before the first client request is processed. Execution is then paused.

Using the underlying hypervisor (AWS Firecracker), Lambda takes an encrypted snapshot of the microVM’s entire memory and disk state. The snapshot is persisted, cached, and bound to the specific published version of the function.
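To make the code-versus-state distinction concrete, here is a minimal sketch in plain Java. `PricingHandler`, its price table, and the `initRuns` counter are hypothetical names invented for illustration, and a real function would implement the Lambda handler interface rather than expose a static method. The only point is that work done in static initializers belongs to the init phase, which is the state a snapshot would capture, while the handler method runs once per request.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical handler shape, written without the Lambda SDK to stay self-contained.
final class PricingHandler {

    // Counts how many times the expensive initialization ran in this JVM.
    static int initRuns = 0;

    // Static initializers run during the init phase, so under SnapStart the
    // populated table would already be sitting in the snapshotted heap.
    private static final Map<String, Integer> PRICE_TABLE = loadPriceTable();

    private static Map<String, Integer> loadPriceTable() {
        initRuns++;
        Map<String, Integer> table = new HashMap<>();
        table.put("basic", 900);   // prices in cents, illustrative values only
        table.put("pro", 2900);
        return table;
    }

    // Per-invocation work: reads the pre-built state and never rebuilds it.
    static int handle(String plan) {
        Integer cents = PRICE_TABLE.get(plan);
        if (cents == null) {
            throw new IllegalArgumentException("Unknown plan: " + plan);
        }
        return cents;
    }

    public static void main(String[] args) {
        System.out.println(handle("basic"));
        System.out.println(handle("pro"));
        System.out.println("init runs: " + initRuns);
    }
}
```

However many requests arrive, the table is built once. Under SnapStart that single build would happen at publish time rather than on the first user request.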

```mermaid
flowchart LR
    A["Developer Publishes Version"] --> B{"SnapStart Init Phase"}
    B --> C["Boot JVM & Load Frameworks"]
    C --> D["Execute CRaC Hooks (Optional)"]
    D --> E["Firecracker Snapshot Created"]
    E --> F[("Encrypted Amazon S3 Cache")]
    subgraph "Live Client Invocation"
        G[["Client Request"]] --> H["Pull Snapshot from Cache"]
        H --> I["Restore Memory Map (150ms)"]
        I --> J["Handler Code Execution"]
    end
```

The Restoration Sequence

When an unpredictable traffic spike hits API Gateway three weeks later and demands 500 concurrent execution environments, the system no longer boots 500 independent JVMs. Instead, Lambda streams the pre-initialized memory snapshot from its cache to the worker hosts and maps the same memory image into 500 separate microVMs, each of which resumes from the identical starting state.

The Entropy Problem: Breaking PRNGs

Restoring an exact point-in-time memory image undermines any randomness that was computed before the snapshot. Every restored environment begins with the same bytes in memory, so anything meant to be unique per environment, including the internal state of a pseudo-random number generator (PRNG), starts out identical across all clones.

Consider a static field holding a `java.util.Random` instance that generates transaction UUIDs during the execution phase. In a standard multi-JVM environment, each JVM constructs and seeds its own generator at boot, so the sequences differ across machines.

```java
import java.util.Random;
import java.util.UUID;

// CRITICAL FLAW under SnapStart
public class TransactionGenerator {

    // This generator is constructed and seeded DURING THE SNAPSHOT PHASE,
    // so its internal state is frozen into the memory image.
    private static final Random prng = new Random();

    public String generateUniqueTransaction() {
        // Every restored clone replays the same sequence from the same state.
        return new UUID(prng.nextLong(), prng.nextLong()).toString();
    }
}
```

Under SnapStart, the generator was instantiated and seeded inside the AWS sandbox *before* the snapshot was captured. When 500 cloned environments resume from identical memory images, all 500 hold the same generator state. The first request served by each clone then produces the same “random” transaction UUID, resulting in primary-key collisions across your persistence layer. The same hazard applies to any ID, key, or nonce computed during initialization and cached in a static field. AWS documents `java.security.SecureRandom` in the managed Java runtimes as safe to use with SnapStart, so the usual fix is to draw from it inside the handler (for example through `UUID.randomUUID()`) rather than replaying state captured at init.
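The cloning effect can be reproduced locally without Lambda. The sketch below is an analogy, not SnapStart itself: it uses Java serialization to stand in for the memory snapshot, relying on the fact that `java.util.Random` is `Serializable`. `SnapshotCloneDemo` and its method names are hypothetical. Two "restored" copies of the same generator produce the same ID, while an ID requested at invocation time does not repeat.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;
import java.util.Random;
import java.util.UUID;

// Hypothetical demo: serialization plays the role of snapshot and restore.
final class SnapshotCloneDemo {

    // Capture the generator's internal state, standing in for the memory snapshot.
    static byte[] snapshot(Random initialized) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(initialized);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Rebuild an independent copy, standing in for one restored environment.
    static Random restore(byte[] image) {
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(image))) {
            return (Random) in.readObject();
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("Could not restore generator", e);
        }
    }

    // Unsafe pattern: the ID depends only on state captured in the snapshot.
    static String idFromSnapshotState(Random restored) {
        return new UUID(restored.nextLong(), restored.nextLong()).toString();
    }

    // Safer pattern: ask for fresh randomness on every call.
    static String idAtInvocationTime() {
        return UUID.randomUUID().toString();
    }

    public static void main(String[] args) {
        byte[] image = snapshot(new Random());
        String fromCloneA = idFromSnapshotState(restore(image));
        String fromCloneB = idFromSnapshotState(restore(image));
        System.out.println("clones collide: " + fromCloneA.equals(fromCloneB));
        System.out.println("fresh ids collide: " + idAtInvocationTime().equals(idAtInvocationTime()));
    }
}
```

An audit for this class of bug comes down to one question: is the value computed before or after the snapshot boundary? Anything computed before it is shared by every clone.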

Coordinated Restore at Checkpoint (CRaC)

Making full use of the SnapStart lifecycle means working with Coordinated Restore at Checkpoint (CRaC), an OpenJDK project whose `org.crac` API the Lambda Java runtimes support as runtime hooks.

Engineers can register hooks that run on either side of the snapshot boundary: one just before the checkpoint is taken and one just after an environment is restored.

Consider an application that opens a connection pool to an Amazon Aurora PostgreSQL instance during initialization. If the connection is established in the snapshot sandbox, the socket state is frozen into the snapshot. When an environment is restored 72 hours later to process client traffic, the database will long since have closed its side of those TCP connections, and the first query fails with a stale-connection error such as a `SocketTimeoutException`.

```java
import java.sql.Connection;
import java.sql.DriverManager;

import org.crac.Context;
import org.crac.Core;
import org.crac.Resource;

public class DbConnectionManager implements Resource {

    private Connection pool;

    public DbConnectionManager() {
        // Register this instance with the CRaC global context so it receives both hooks
        Core.getGlobalContext().register(this);
    }

    @Override
    public void beforeCheckpoint(Context<? extends Resource> context) throws Exception {
        // Runs just BEFORE Lambda takes the snapshot
        System.out.println("Halting system: Gracefully closing database sockets to prevent staleness.");
        if (pool != null) {
            pool.close();
            pool = null; // do not carry a closed handle into the snapshot
        }
    }

    @Override
    public void afterRestore(Context<? extends Resource> context) throws Exception {
        // Runs just AFTER an execution environment is restored from the snapshot
        System.out.println("System restored: Opening a fresh database connection.");
        this.pool = DriverManager.getConnection(System.getenv("DB_URL"));
    }
}
```
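Hooks handle the snapshot boundary itself, but a connection can also go stale long after a restore. A complementary defensive pattern, sketched here under assumptions, is to validate a resource on use and rebuild it when it is no longer usable. `RevalidatingResource` is a hypothetical helper and not part of CRaC or any AWS library. For a JDBC connection, the factory would wrap `DriverManager.getConnection` and the check would wrap `Connection.isValid`, with their checked `SQLException` handled inside the lambdas.

```java
import java.util.function.Predicate;
import java.util.function.Supplier;

// Hypothetical helper: hands out a resource, recreating it when the health check fails.
final class RevalidatingResource<T> {

    private final Supplier<T> factory;
    private final Predicate<T> isUsable;
    private T current;

    RevalidatingResource(Supplier<T> factory, Predicate<T> isUsable) {
        this.factory = factory;
        this.isUsable = isUsable;
    }

    // Returns the cached resource if it still passes the check, otherwise builds a new one.
    synchronized T get() {
        if (current == null || !isUsable.test(current)) {
            current = factory.get();
        }
        return current;
    }

    public static void main(String[] args) {
        int[] created = {0};
        boolean[] healthy = {true};
        RevalidatingResource<String> resource = new RevalidatingResource<>(
                () -> "connection-" + (++created[0]),
                value -> healthy[0]);

        System.out.println(resource.get()); // first use creates the resource
        System.out.println(resource.get()); // still usable, so it is reused
        healthy[0] = false;                 // simulate a socket that died upstream
        System.out.println(resource.get()); // failed check triggers a rebuild
    }
}
```

The trade-off is one extra validation per use, which for a database connection usually means a cheap round trip. Whether that cost is acceptable depends on the workload.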

Economics and Strict Operational Limitations

For Java functions, SnapStart carries no additional charge: you do not pay for snapshot caching or for the sandbox initialization run. (AWS does bill snapshot caching and restoration for the Python and .NET runtimes.) For many workloads it removes the financial pressure to configure Provisioned Concurrency.

However, deployment pipelines take on some operational friction:

- Versions are required: SnapStart does not work against the mutable `$LATEST` pointer. Because a snapshot is tied to an immutable version, you must point API Gateway or EventBridge integrations at an explicitly published version (e.g. `arn:aws:lambda:…:function:api:14`) or at an alias that resolves to one.

- Slow CI/CD builds: Publishing a version now includes unpacking the zip file, initializing the framework, and capturing the memory snapshot, which takes a noticeable interval (often between 1 and 3 minutes per version). Developers iterating on small patches generally disable SnapStart in their development configuration (for example, through an environment-specific override in `samconfig.toml`) to avoid long feedback cycles.
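The versioning rule lends itself to a pre-deployment check. The following sketch classifies a function ARN by its qualifier so a pipeline can flag integrations that still point at `$LATEST`. `SnapStartTargetCheck` is a hypothetical helper, not part of any AWS SDK, and the regular expression is a simplified approximation of the ARN shape that assumes a 12-digit account ID. The sample ARN uses a placeholder region and account.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical pre-deployment check; not part of any AWS SDK.
final class SnapStartTargetCheck {

    enum Target { PUBLISHED_VERSION, ALIAS, LATEST_OR_UNQUALIFIED, NOT_A_FUNCTION_ARN }

    // Simplified shape: arn:<partition>:lambda:<region>:<account>:function:<name>[:<qualifier>]
    private static final Pattern FUNCTION_ARN = Pattern.compile(
            "^arn:[^:]+:lambda:[^:]+:\\d{12}:function:[^:]+(?::([^:]+))?$");

    static Target classify(String arn) {
        Matcher m = FUNCTION_ARN.matcher(arn);
        if (!m.matches()) {
            return Target.NOT_A_FUNCTION_ARN;
        }
        String qualifier = m.group(1);
        if (qualifier == null || qualifier.equals("$LATEST")) {
            return Target.LATEST_OR_UNQUALIFIED; // an unqualified ARN resolves to $LATEST
        }
        if (qualifier.matches("\\d+")) {
            return Target.PUBLISHED_VERSION;     // numeric qualifiers are version numbers
        }
        return Target.ALIAS;                     // needs a lookup to see which version it targets
    }

    public static void main(String[] args) {
        // Placeholder region and account ID, for illustration only.
        System.out.println(classify("arn:aws:lambda:us-east-1:123456789012:function:api:14"));
        System.out.println(classify("arn:aws:lambda:us-east-1:123456789012:function:api"));
    }
}
```

An alias is reported separately because the ARN alone does not reveal which version it resolves to. A stricter pipeline might resolve the alias through the Lambda API before deciding.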

Key Takeaways

- The cold start penalty is largely solved for Java. Instead of repeating a multi-second framework initialization, Lambda restores a pre-initialized microVM snapshot from cache, bringing startup down to roughly 150 ms.

- Entropy is the sharp edge. Cloning a memory image clones any generator state inside it; identical restored environments can emit identical keys and UUIDs, so audit every static initializer that computes or caches random values.

- Use CRaC hooks. Close TCP connections in `beforeCheckpoint` before the snapshot is taken and reopen them in `afterRestore`, so restored environments never start with stale sockets.

- Always target published versions. SnapStart applies to specific published versions, never to the mutable `$LATEST` pointer.

Glossary

- AWS Lambda SnapStart

- The AWS Lambda feature that avoids repeated initialization by restoring an encrypted, pre-initialized memory snapshot of a function version into new execution environments.

- Firecracker MicroVM

- The lightweight, open-source virtual machine monitor underlying Lambda, designed to isolate workloads from different tenants running on the same bare-metal hosts.

- CRaC (Coordinated Restore at Checkpoint)

- The OpenJDK project and API that lets application code run hooks immediately before a checkpoint is taken and immediately after a process is restored from it.

- Provisioned Concurrency

- The Lambda option that keeps a configured number of execution environments initialized and warm, billed for as long as it is enabled, to eliminate cold starts.

