Introduction: AWS re:Invent 2023 delivered transformative announcements for enterprise AI adoption, with Amazon Bedrock reaching general availability and Amazon Q emerging as AWS’s answer to AI-powered enterprise assistance. These services represent AWS’s strategic vision for making generative AI accessible, secure, and enterprise-ready. After integrating Bedrock into production workloads, I’ve found its model-agnostic approach and native […]

Month: August 2024

Guardrails and Safety for LLMs: Building Secure AI Applications with Input Validation and Output Filtering

Introduction: Production LLM applications need guardrails to ensure safe, appropriate outputs. Without proper safeguards, models can generate harmful content, leak sensitive information, or produce responses that violate business policies. Guardrails provide defense-in-depth: input validation catches problematic requests before they reach the model, output filtering ensures responses meet safety standards, and content moderation prevents harmful generations. […]

Rate Limiting for LLM APIs: Token Buckets, Queues, and Adaptive Throttling

Introduction: LLM APIs have strict rate limits—requests per minute, tokens per minute, and concurrent request limits. Exceeding these limits results in 429 errors that can cascade through your application. Effective rate limiting on your side prevents hitting API limits, provides fair access across users, and enables graceful degradation under load. This guide covers practical rate […]
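The token bucket named in the title can be sketched in a few lines. This is a minimal illustration, not the linked post's implementation: the `TokenBucket` class, its `rate`, `capacity`, and `cost` parameters, and the injectable `clock` are all assumptions made for the example. One token might stand for one request, or `cost` could be set to an estimated token count to approximate a tokens-per-minute limit.

```python
import time


class TokenBucket:
    """Hypothetical client-side limiter: holds up to `capacity` tokens,
    refilled continuously at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock          # injectable so the bucket can be tested
        self.tokens = capacity      # start full
        self.updated = clock()

    def _refill(self):
        # Add tokens for the time elapsed since the last update, capped at capacity.
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now

    def try_acquire(self, cost=1.0):
        """Spend `cost` tokens if available; return False instead of blocking."""
        self._refill()
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False

    def wait_time(self, cost=1.0):
        """Seconds until `cost` tokens would be available (0.0 if available now)."""
        self._refill()
        missing = cost - self.tokens
        return max(0.0, missing / self.rate)
```

A caller would typically check `try_acquire()` before each API request and, on `False`, sleep for `wait_time()` or queue the request rather than sending it and receiving a 429.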

LLM Security: Understanding Prompt Injection, Jailbreaking, and Attack Vectors (Part 1 of 2)

A comprehensive guide to securing LLM applications against prompt injection, jailbreaking, and data exfiltration attacks. Includes production-ready defense implementations.

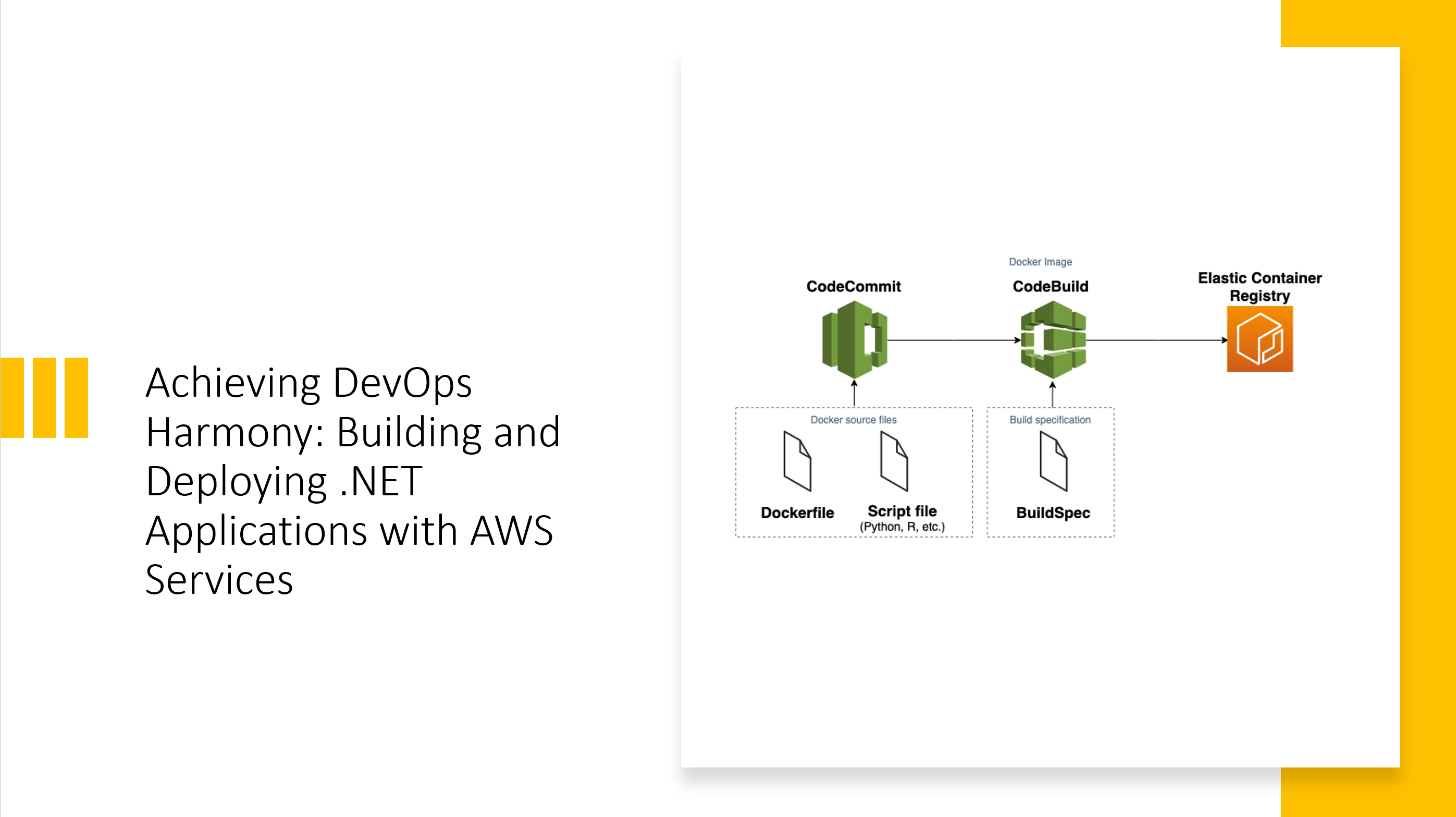

Achieving DevOps Harmony: Building and Deploying .NET Applications with AWS Services

The Evolution of .NET Deployment on AWS: After two decades of building enterprise applications, I’ve witnessed the transformation of deployment practices from manual FTP uploads to sophisticated CI/CD pipelines. When AWS introduced its native DevOps toolchain, it fundamentally changed how we approach .NET application delivery. The integration between CodeCommit, CodeBuild, CodePipeline, and ECR creates a […]

LLM Batch Processing: Scaling AI Workloads from Hundreds to Millions

Introduction: Processing thousands or millions of items through LLMs requires different patterns than single-request applications. Naive sequential processing is too slow, while uncontrolled parallelism hits rate limits and wastes money on retries. This guide covers production batch processing patterns: chunking strategies, parallel execution with rate limiting, progress tracking, checkpoint/resume for long jobs, cost estimation, and […]
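Three of the patterns listed above (chunking, bounded parallelism, and checkpoint/resume) can be combined in a short sketch. Everything here is an assumption for illustration rather than the linked post's code: the `chunked` and `run_batch` helpers, the JSON checkpoint file, and the `process` callable, which stands in for a real LLM call and must return JSON-serializable results.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def chunked(items, size):
    """Yield consecutive slices of `items` with at most `size` elements."""
    for i in range(0, len(items), size):
        yield items[i:i + size]


def run_batch(items, process, chunk_size=100, max_workers=4, checkpoint=None):
    """Apply `process` to every item, at most `max_workers` at a time.

    Results are keyed by item index and written to `checkpoint` (a JSON file
    path, hypothetical format) after each chunk, so a restarted job skips
    items that already finished.
    """
    done = {}
    path = Path(checkpoint) if checkpoint else None
    if path and path.exists():
        done = json.loads(path.read_text())  # resume from an earlier run

    pending = [(i, item) for i, item in enumerate(items) if str(i) not in done]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for chunk in chunked(pending, chunk_size):
            # pool.map preserves input order, so results line up with `chunk`.
            results = pool.map(lambda pair: process(pair[1]), chunk)
            for (i, _), result in zip(chunk, results):
                done[str(i)] = result
            if path:
                path.write_text(json.dumps(done))  # checkpoint per chunk

    return [done[str(i)] for i in range(len(items))]
```

The thread pool caps concurrency but does not by itself respect requests-per-minute limits; in practice `process` would also need a client-side rate limiter and retry handling.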
